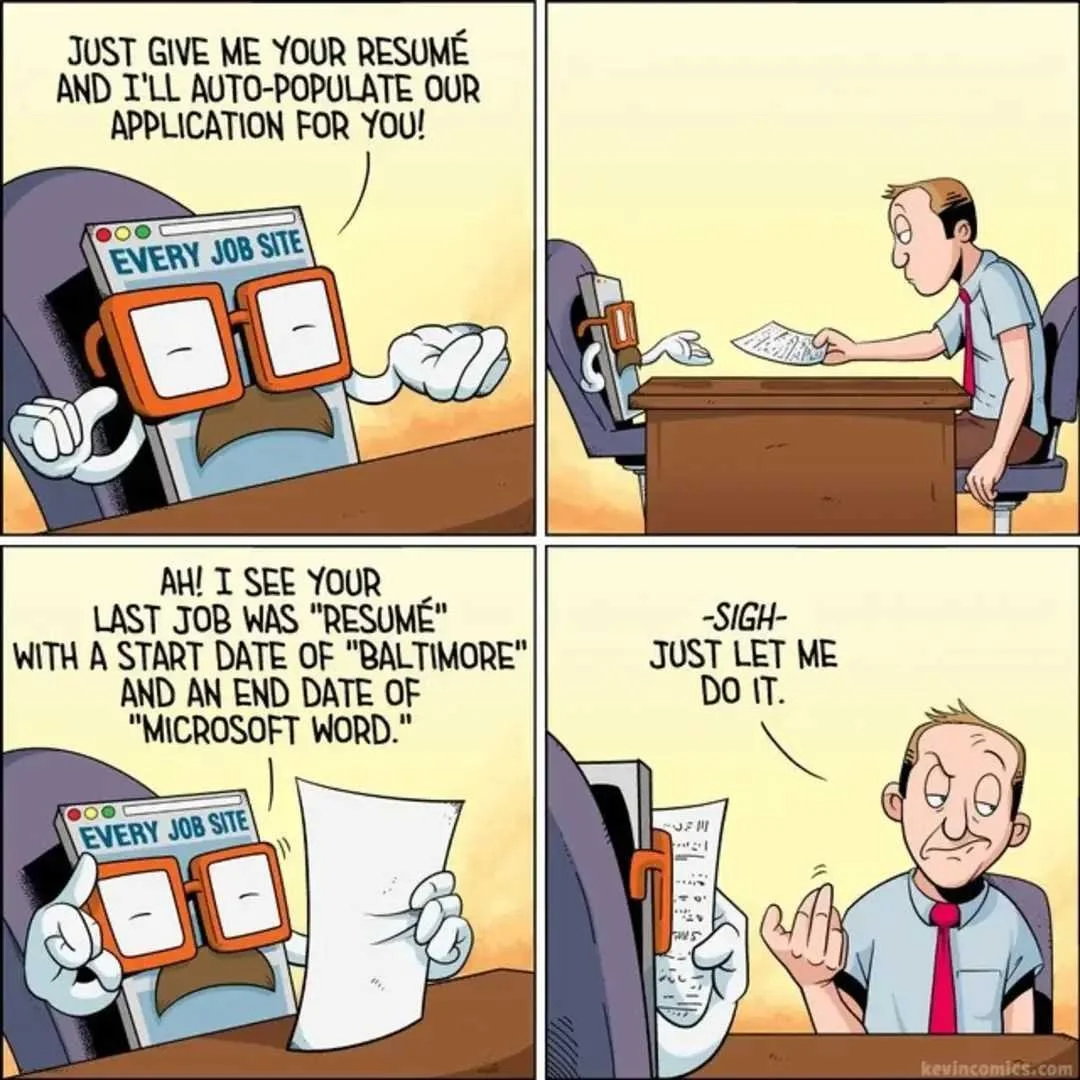

Every developer, whoever they are and whatever the context, is going to run up against the dreaded “behavioral interview” segment of a company’s interview pipeline. When the market is hard, like it was in 2023 and is in ‘24 (the time of this post), you need to prepare. As the opening sentence implies, this advice and these breakdowns are targeted at people attempting to land a software engineer/developer position. While some of this advice may be relevant to other fields, I would discourage drawing too many conclusions from it.

Preface

There is a big difference between small startups and larger enterprises, both in what an interviewer expects from a candidate and in the context in which they ask their questions. At the smallest of the small, many startups are staffed with generalists, and developers are expected to do many things with very little structure. At larger enterprises, developers focus only on the software itself; they may not even need to concern themselves with the CI/CD pipeline, monitoring, or even provisioning of resources (crazy, right!?). Make sure you are aware of the interviewer’s context when answering questions.

The standard practice for answering behavioral interview questions has consolidated around the STAR methodology:

Situation - give the context but do not overwhelm with details

Task - plainly and clearly state the task (if there was one)

Action - this should be the bulk of your answer. Avoid “we,” as it suggests you were merely a part of the decision making rather than in charge of it

Result - the positive outcome (always positive). Do not leave a cliffhanger; tie up all loose ends

Finally, make sure you are addressing your audience with a story that is relevant to them. If it is someone from Customer Success listening to you speak, don’t talk about the intricacies of RCP or the build system. Talk about something they can relate to. Keep the technical details light and center the narrative on the work.

Example Questions

The important part of each question is to ascertain what the interviewer is attempting to get out of asking it, i.e. what signal they want to produce. Sometimes they’ll flip a question on you to see how you’ll respond to its corollary. So even though the two questions below are essentially the same question, the approach to each is radically different. Tread with care.

Tell Me About a Time When You Were Tasked with Solving a Difficult Problem and Were Successful

This is a chance for you to tell a story that positions you as a champion problem solver; one who is filled with grit, determination, intelligence, and expertise in the subject domain. Since it is a positive story, you need to ensure that in telling the story, you focus on the successful outcome and downplay interpersonal challenges you may have faced along the way. If there are aspects of your career or skill sets you want the interviewer to explore further, those things should be part of the answer to this question.

Areas of Focus

Communication Skills - How you identified and set expectations

Collaboration - How you engaged the team(s) involved in the project. Bonus points for inter-departmental problems.

Technical Knowledge - At a high level, why this was difficult

Areas to Avoid

Technical Details - While you lived it, they did not. Getting bogged down in details may steal the momentum of the story.

Historical Problems - No one wants to hear how awful the last developer was, how some library is junk or what poor decision making led to this problem. Skip it.

Failure - They asked for success. Everything succeeded. Right? Right!?

Tell Me About a Time You Were Given a Task but Failed

This is the opposite of the above question. However, unlike the previous question, they aren’t waiting to hear how good a storyteller you are. They are looking for four important qualities: risk management, resiliency, a growth mindset, and communication. While it is about failure, it cannot be a catastrophic failure, and the underlying cause cannot be some dysfunction within the organization. At the end of it, you must come out in some way, shape, or form “better.”

Areas of Focus

Learnings - It was a failure. What did you learn and how will you avoid this in the future?

Ownership - What were your role and responsibilities in the project?

Outcome - What positive came out of the story (besides what you learned)? Even though this is about “failure,” it must have a positive result

Areas to Avoid

Finger Pointing - Don’t blame external factors. They can contribute to the problem or form the context around the story, but they cannot be the reason for the failure.

Negative Emotions - No one likes to fail. I get it. You can be angry or bitter about the event(s) but not during the interview. You must stay positive and upbeat the entire time.

Minutiae - There might be some small details that you experienced which have been seared into your memory forever. You don’t need to tell all of them to the interviewer.

Conclusion

One story for each type of question is not enough. What an interviewer seeks and what information they’re attempting to gather depends on the type of question. Whatever the case, the story must have a clear start and must end on a higher note. Details can distract and hide the merits of your actions; be stingy and keep them pertinent.